Many of you reading this post might have a preconceived notion of how much data a typical Sales Cloud or Force.com app can handle. The truth is that as salesforce.com's popularity has skyrocketed, so too has the size of the databases underlying custom and standard app implementations on our cloud platforms. It might surprise you to learn that our team works regularly with customers that have large Force.com objects with upwards of 10 million records.

Best Practices for Large Data Volumes on Salesforce

Being successful with large data volumes on Force.com requires knowing and applying many data management best practices. Here are a couple of examples.

| Problem | Best Practices |
| --- | --- |
| Slow report on a large object | |
| Slow bulk data load | |

Some best practices are simple, while others are a bit more challenging. In any case, I’m not going to list all such best practices for Force.com data management here in this post. For a complete list, please read Best Practices for Deployments with Large Data Volumes.

Distributed Apps and Big Data

Every individual application platform has practical limits, and that’s when distributed processing makes sense. By integrating different systems to support a single app, you can scale your app to new heights.

Just as with other platforms, individual components within the Salesforce Platform each have practical limits, which shouldn’t surprise you. But the beauty of the platform is that it’s very easy to integrate different components, share state, authentication, and access controls, and thus support tremendous amounts of data for even the most demanding big data app.

The Power of the Salesforce Platform

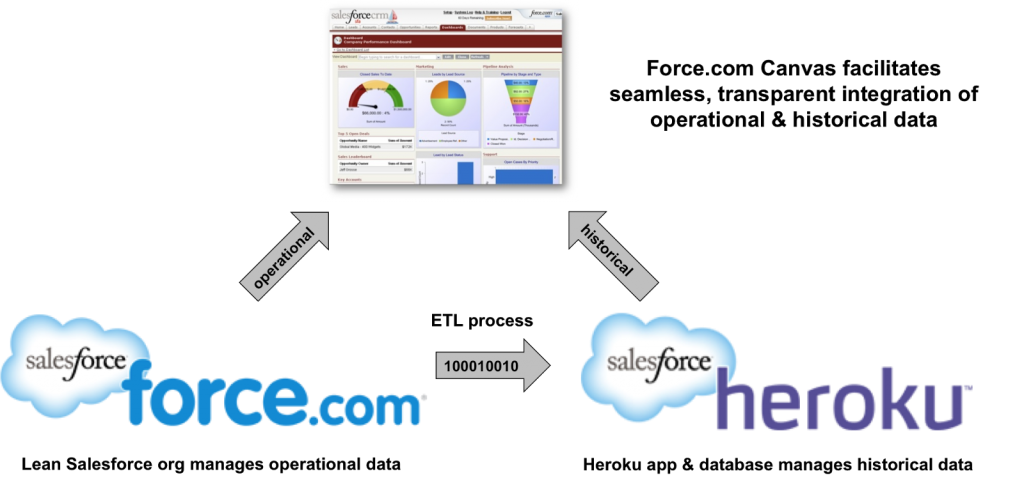

To realize what’s possible with the Salesforce Platform for managing large amounts of data, consider the scenario in the following illustration.

To maintain lightning-fast access to operational data in the Sales Cloud, you can create an app on Heroku that manages historical data. You keep your Sales Cloud org lean, stay in compliance with your record keeping requirements, and can optimize the Heroku app and database to deliver the analytics you need on those historical records.
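To make the split between operational and historical data concrete, here is a minimal sketch of the selection step only. It is not Salesforce or Heroku API code: the `Record` type, the `closed_on` field, and the `split_for_archive` helper are hypothetical names invented for illustration, and the one-year retention window is an assumption rather than a recommendation.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Record:
    record_id: str   # hypothetical identifier
    closed_on: date  # hypothetical "last relevant activity" date


def split_for_archive(records, today, retention_days):
    """Return (operational, historical) lists based on a retention cutoff."""
    cutoff = today - timedelta(days=retention_days)
    operational, historical = [], []
    for rec in records:
        # Records closed before the cutoff are candidates for the archive app.
        if rec.closed_on < cutoff:
            historical.append(rec)
        else:
            operational.append(rec)
    return operational, historical


records = [
    Record("a", date(2013, 1, 15)),
    Record("b", date(2010, 6, 1)),
    Record("c", date(2012, 12, 31)),
]
keep, archive = split_for_archive(
    records, today=date(2013, 2, 1), retention_days=365
)
print("stay operational:", [r.record_id for r in keep])
print("move to archive:", [r.record_id for r in archive])
```

In a real deployment the two lists would drive an export from the org and a load into the Heroku database; how you move the data is a separate design decision that this sketch leaves out.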

On top of it all, you glue together the UIs of your two apps using Force.com Canvas. This technology lets you embed the UI of a remote app within a Force.com app or customized Salesforce app, such that users don’t even realize they are getting data from outside.
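For the remote app to trust the context it receives, it has to check who sent it. The sketch below assumes the signed request arrives as a string of the form `signature.payload`, where the signature is a Base64-encoded HMAC-SHA256 of the Base64-encoded payload, keyed with the app's consumer secret. Treat that format as an assumption and confirm it against the Force.com Canvas documentation. The function names and the sample context are hypothetical.

```python
import base64
import hashlib
import hmac
import json


def _sign(encoded_payload: bytes, consumer_secret: str) -> bytes:
    """Base64-encoded HMAC-SHA256 of the already-encoded payload."""
    digest = hmac.new(
        consumer_secret.encode(), encoded_payload, hashlib.sha256
    ).digest()
    return base64.b64encode(digest)


def verify_signed_request(signed_request: str, consumer_secret: str) -> dict:
    """Verify an assumed 'signature.payload' string and return the decoded JSON."""
    encoded_sig, encoded_payload = signed_request.split(".", 1)
    expected = _sign(encoded_payload.encode(), consumer_secret)
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(expected, encoded_sig.encode()):
        raise ValueError("signature mismatch")
    return json.loads(base64.b64decode(encoded_payload))


def make_signed_request(context: dict, consumer_secret: str) -> str:
    """Build a request in the assumed format; used here only to exercise the check."""
    encoded_payload = base64.b64encode(json.dumps(context).encode())
    return (_sign(encoded_payload, consumer_secret) + b"." + encoded_payload).decode()


secret = "not-a-real-secret"
request = make_signed_request({"user": "demo"}, secret)
print(verify_signed_request(request, secret))
```

A request signed with a different secret, or one whose payload was altered in transit, raises `ValueError` instead of returning a context.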

Pretty cool and relatively easy to implement. Don’t believe me? See for yourself.

Upcoming Webinar

On February 20, Bud Vieira and I will be doing a webinar, Extreme Salesforce Data Volumes, that’ll explore and demonstrate everything I just mentioned in this post. We’ll cover a plethora of data management best practices and wrap things up with a demo that integrates Sales Cloud with a Heroku app that is exposed using Force.com Canvas. There’ll be some great takeaways from the webinar, including a new cheat sheet and downloadable source code that you can use to see how our demo is built. Perhaps you’ll even use it to kick-start your own big data solution on the Salesforce Platform!

Related Resources

- Best Practices for Deployments with Large Data Volumes

- Force.com Canvas

- Heroku

- Architect Core Resources

About the Author and CCE Technical Enablement

Steve Bobrowski is an Architect Evangelist within the Salesforce Customer Centric Engineering group. The team’s mission is to help customers understand how to implement technically sound Salesforce solutions. Check out all of the resources that this team maintains on the Architect Core Resources page of Developer Force.