In February, Bud Vieira and I delivered the Extreme Salesforce Data Volumes webinar. If you didn’t catch the live webinar, click on the previous link and watch the recording to:

- Explore best practices for the design, implementation, and maintenance phases of your app’s lifecycle.

- Learn how seemingly unrelated components can affect one another and determine the ultimate scalability of your app.

- See live demos that illustrate innovative solutions to tough challenges, including the integration of an external database using Force.com Canvas.

- Walk away with practical tips for putting best practices into action.

Salesforce Platform Demo App: Force.com Canvas plus Heroku

The tail end of the webinar uses a sample app to demonstrate several of the best practices that Bud and I covered. Back in February, I promised everyone that I’d put together an article that teaches you how to implement the sample app from the ground up. That article, Extreme Salesforce Data: Distributed Application Partitioning with Force.com Canvas and Heroku, is now available.

What You Will Learn

The Extreme Salesforce Data Volumes webinar explains an important best practice for Salesforce organizations that contain a lot of data:

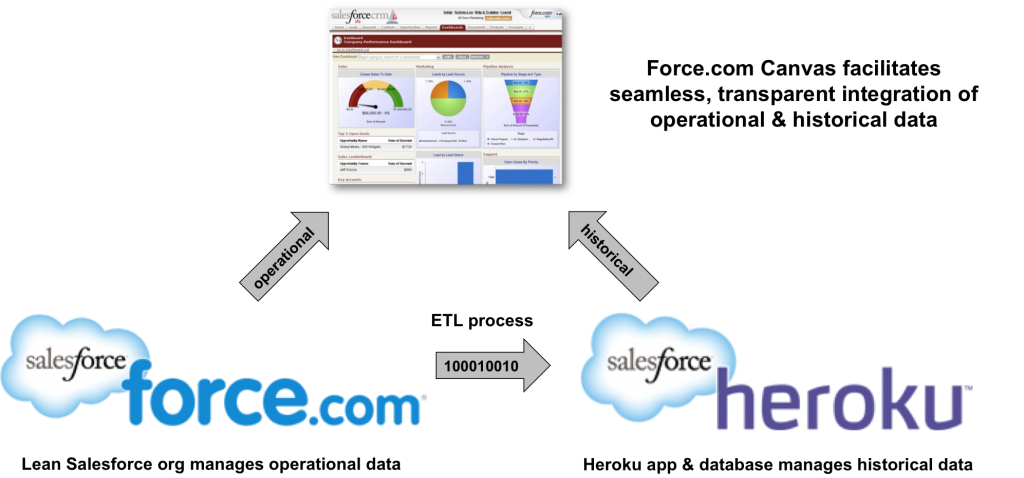

Keep the operational data set in your org as lean as possible by archiving “historical” data elsewhere.

When you follow this best practice, queries and other operations against operational data have less data to process, so they can execute more efficiently than they would against a larger data set.
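
To make the idea concrete, here is a minimal Python sketch of splitting records into an operational set and a historical (archive-candidate) set. It assumes a hypothetical `Record` type whose `close_date` determines a record's age and a hypothetical 365-day retention window. The webinar does not prescribe a specific cutoff, so choose one that fits your own business rules.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Record:
    record_id: str
    close_date: date  # hypothetical field used to decide whether a record is "historical"


def partition_by_age(records, today, retention_days=365):
    """Split records into (operational, historical) lists.

    Records whose close_date falls before (today - retention_days) are treated
    as historical and become candidates for archiving; all others stay in the
    operational data set. retention_days is a hypothetical policy value.
    """
    cutoff = today - timedelta(days=retention_days)
    operational, historical = [], []
    for rec in records:
        if rec.close_date < cutoff:
            historical.append(rec)
        else:
            operational.append(rec)
    return operational, historical


if __name__ == "__main__":
    today = date(2013, 6, 1)
    records = [
        Record("001A", date(2013, 5, 20)),
        Record("001B", date(2011, 3, 14)),
        Record("001C", date(2012, 6, 1)),
    ]
    ops, hist = partition_by_age(records, today)
    print([r.record_id for r in ops], [r.record_id for r in hist])
```

Keeping the cutoff in one parameter makes the retention policy explicit, so it can be reviewed and changed without touching the partitioning logic.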

Practically speaking, there are multiple approaches that you can use to implement this best practice. You might simply create objects in your Salesforce org to store historical information and build analytics around these objects, or you could migrate historical data to an external system that's specifically designed for data analytics, such as a data warehouse. The choice is yours and depends on your specific use case.
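
For the external-system approach, one core step is loading historical records into the archive without creating duplicates when a load is re-run. The following minimal sketch keys the archive on the Salesforce record Id. It uses Python's built-in `sqlite3` module as a stand-in for an external database such as Heroku Postgres; the table name `archived_opportunity` and its columns are hypothetical. In PostgreSQL, the counterpart of SQLite's `INSERT OR REPLACE` would be an `INSERT` with an `ON CONFLICT (sf_id) DO UPDATE` clause.

```python
import sqlite3


def archive_records(conn, rows):
    """Copy historical rows into a (hypothetical) archive table.

    The Salesforce record Id is the primary key, so re-running the same batch
    overwrites existing rows instead of inserting duplicates.
    """
    conn.execute(
        """CREATE TABLE IF NOT EXISTS archived_opportunity (
               sf_id      TEXT PRIMARY KEY,
               name       TEXT NOT NULL,
               amount     REAL,
               close_date TEXT
           )"""
    )
    # Named placeholders are filled from each dict in rows.
    conn.executemany(
        "INSERT OR REPLACE INTO archived_opportunity (sf_id, name, amount, close_date) "
        "VALUES (:Id, :Name, :Amount, :CloseDate)",
        rows,
    )
    conn.commit()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")  # stand-in for the external archive database
    batch = [
        {"Id": "006A", "Name": "Old deal", "Amount": 1200.0, "CloseDate": "2011-03-14"},
        {"Id": "006B", "Name": "Older deal", "Amount": 800.0, "CloseDate": "2010-11-02"},
    ]
    archive_records(conn, batch)
    archive_records(conn, batch)  # re-running the load does not duplicate rows
    print(conn.execute("SELECT COUNT(*) FROM archived_opportunity").fetchone()[0])
```

Making the load idempotent matters for archiving jobs because a failed or interrupted run can simply be repeated.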

Extreme Salesforce Data: Distributed Application Partitioning with Force.com Canvas and Heroku is a tutorial that shows you how to tackle the latter approach. It explains how to implement an external application that stores historical records and then integrate its user interface (UI) and user authentication into Salesforce so that your users can analyze historical data right alongside operational data. The article uses a combination of Salesforce Platform technologies, including Force.com, Heroku, and Force.com Canvas.

Read this article to learn how to:

- Create a Ruby on Rails app to store historical records from Salesforce.

- Deploy the app on Heroku.

- Integrate the app’s UI and user authentication into Salesforce using Force.com Canvas.

Along the way, you’ll also learn a little bit about PostgreSQL, OAuth2, and an interesting data loading utility called Jitterbit Data Loader for Salesforce.
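
On the authentication side, a canvas app that uses signed-request authentication receives a signed request from Salesforce and should verify it before trusting the OAuth token it contains. The following Python sketch is not the article's Rails code. It assumes the format described in the Force.com Canvas documentation: a Base64-encoded HMAC-SHA256 signature, a period, and a Base64-encoded JSON envelope, where the signature is computed over the encoded envelope using the connected app's consumer secret. The secret and token values in the example are placeholders.

```python
import base64
import hashlib
import hmac
import json


def verify_signed_request(signed_request, consumer_secret):
    """Verify a canvas signed request and return its decoded JSON envelope.

    Assumed format: "<base64 signature>.<base64 JSON envelope>", with the
    signature = HMAC-SHA256(consumer_secret, encoded_envelope).
    Raises ValueError if the request is malformed or the signature is wrong.
    """
    try:
        encoded_sig, encoded_envelope = signed_request.split(".", 1)
    except ValueError:
        raise ValueError("malformed signed request") from None

    expected = hmac.new(
        consumer_secret.encode("utf-8"),
        encoded_envelope.encode("utf-8"),
        hashlib.sha256,
    ).digest()
    # Constant-time comparison; b64decode raises binascii.Error (a ValueError) on bad input.
    if not hmac.compare_digest(expected, base64.b64decode(encoded_sig)):
        raise ValueError("signature mismatch")

    envelope = json.loads(base64.b64decode(encoded_envelope))
    if envelope.get("algorithm") != "HMACSHA256":
        raise ValueError("unexpected algorithm")
    return envelope


if __name__ == "__main__":
    secret = "example-consumer-secret"  # placeholder; use your connected app's secret
    envelope = {"algorithm": "HMACSHA256", "client": {"oauthToken": "hypothetical-token"}}
    encoded_env = base64.b64encode(json.dumps(envelope).encode("utf-8")).decode("ascii")
    sig = hmac.new(secret.encode("utf-8"), encoded_env.encode("utf-8"), hashlib.sha256).digest()
    request = base64.b64encode(sig).decode("ascii") + "." + encoded_env
    print(verify_signed_request(request, secret)["client"]["oauthToken"])
```

Comparing digests with `hmac.compare_digest` rather than `==` avoids leaking timing information about how much of a forged signature matched.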

Disclaimer: The sample app in this article uses Heroku Postgres, which runs on Amazon Web Services (AWS), as the data store for historical Salesforce data. Carefully consider what data you archive and where you store it to ensure that the underlying data center complies with your specific requirements.

Related Resources

- Salesforce Platform

- Force.com Canvas

- Heroku and Heroku Postgres

- Jitterbit Data Loader for Salesforce

- OAuth topic page on Developer Force

- Extreme Salesforce Data Volumes

- Best Practices for Deployments with Large Data Volumes

- Architect Core Resources

About the Author

Steve Bobrowski is an Architect Evangelist within the Technical Enablement team of the salesforce.com Customer-Centric Engineering group. The team’s mission is to help customers understand how to implement technically sound Salesforce solutions. Check out all of the resources that this team maintains on the Architect Core Resources page of Developer Force.